SRCD 2019 Conversation Hour on Scientific Integrity, Transparency, & Openness

- Lisa Gennetian, NYU, Associate Editor Child Development

- Rick Gilmore, Penn State/Databrary.org

- Chuck Kalish, SRCD

- Catherine Tamis-LeMonda, NYU, SRCD Governing Council

- Carol Worthman, Emory, SRCD Governing Council

https://gilmore-lab.github.io/2019-03-22-SRCD-conversation/

Agenda

- On reproducibility

- SRCD responds

- Issues

- Addressing the issues

- Embracing an open future

- Continuing the conversation

On reproducibility

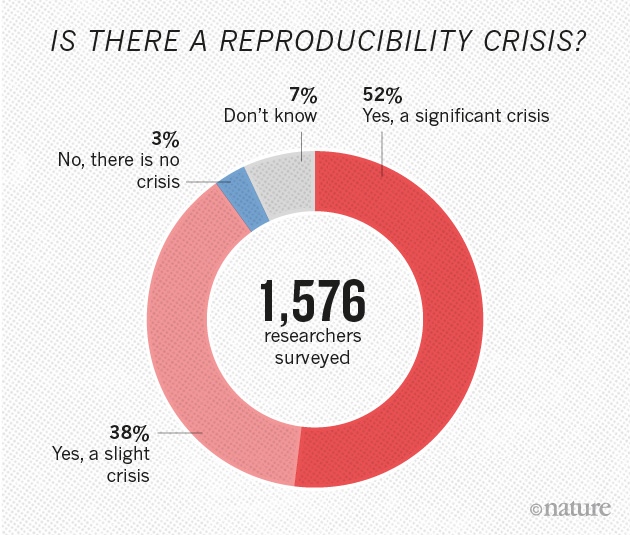

Is there a reproducibility crisis in science?

- Yes, a significant crisis

- Yes, a slight crisis

- No crisis

- Don’t know

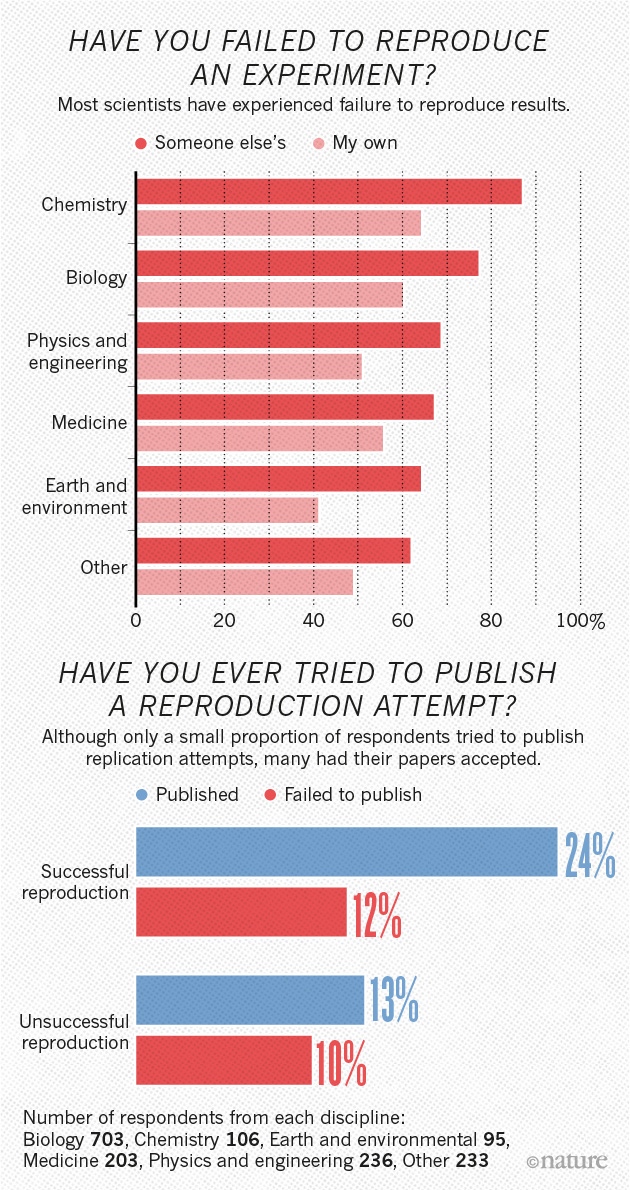

Have you failed to reproduce an experiment from your lab or someone else’s?

Open science meets developmental research

- Center for Open Science (COS) asked SRCD to adopt the Transparency & Openness Promotion (TOP) Guidelines

TOP guidelines

- Citation standards

- Data, analytic methods (code), and research materials transparency

- Preregistration of studies

- Preregistration of analysis plans

- Replication

- SRCD Governing Council asked Publications Committee to address the request

- Publications Committee requested a task force be formed

Task Force Members

- Lisa Braverman (SRCD)

- Pamela Cole (Penn State); Publications Committee Chair

- Lisa Gennetian (NYU); Task Force Chair 2017; Associate Editor Child Development

- Rick Gilmore (Penn State); Task Force Chair 2017-2018; Co-Founder/Co-Director Databrary.org

- Chuck Kalish (SRCD)

- Judith Smetana (Rochester); Editor, Child Development Perspectives

- Catherine Tamis-LeMonda (NYU), Governing Council

- Marcel van Aken (Utrecht); Executive Committee, ISSBD

- Suman Verma (Panjab University); SRCD International Affairs Committee

- Carol Worthman (Emory University), Governing Council

SRCD responds

Survey of CD first authors regarding data & materials sharing

Over a six-month period in 2016-2018, \(n=36\) authors were contacted and \(n=24\) responded.
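As a quick check on the counts above, the implied response rate is simple arithmetic on the two reported numbers:

\[
\text{response rate} = \frac{24}{36} = \frac{2}{3} \approx 66.7\%
\]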

| Question | Early career | Senior |
|---|---|---|
| Any prior experience with posting/sharing data? | 40% | 30% |
| Does your institution provide resources for preparation/posting of data? | 80% | 80% |

What, if any, of the below concerns do you have about data sharing if CD were to require data from published studies be made available to other researchers?

| Concern | Early career | Senior |
|---|---|---|
| IRB | 50% | 89% |
| Jeopardizing trust of participants/communities/violating consent | 90% | 77% |
| Risk of scooping planned research | 50% | 56% |
| Taking time away from my research | 50% | 56% |

Concerns rated lower in importance: financial cost, fairness/equal partnership, trust in collaboration beyond data sharing.

Motivations for action

- SRCD behind the curve

- Can build on emerging best practices

- Craft policies and procedures tailored to our community

- Avoid exacerbating inequity

New policies (effective April 1, 2019)

- Guidelines for Child Development authors

- Policy statement

“The advancement of detailed and diverse knowledge about the development of the world’s children is essential for improving the health and well-being of humanity…”

“We regard scientific integrity, transparency, and openness as essential for the conduct of research and its application to practice and policy…”

https://www.srcd.org/about-us/policy-scientific-integrity-transparency-and-openness

What are Scientific Integrity, Openness, & Transparency?

“By scientific integrity, we mean the ‘active adherence to the ethical practices and professional standards essential for the responsible practice of research.’”

“Scientific integrity includes the core values of openness, objectivity, fairness, honesty, accountability and stewardship at every step in the scientific enterprise.”

“By transparency, we mean the clear, accurate, and complete reporting of all components of scientific research.”

“Transparency includes, but is not limited to, reporting the following: participant characteristics, how participants were identified, recruited, and screened, and by what criteria they were included or excluded; how and when participants were tested, measured, or observed…”

“…what apparatus, equipment, or instruments were employed; what transformations the measures or observations underwent; what material and financial resources supported the research.”

“By openness, we refer to the sharing of scientific resources, such as methods, measures, and data, in order to further scientific advances.”

“Scientific openness ranges from provision of materials to other scientists, at no cost or specific obligation, to the depositing of scientific data in data sharing repositories”

Your thoughts?

Issues

Increase in risks to participants

- How can I share data without jeopardizing trust of participants/communities or violating consent?

- How can confidentiality of shared data be protected?

- Do participants understand risks of data sharing?

- How do I talk to participants?

Problems for ethics review

- Must minors be reconsented when they reach adulthood?

- Can future secondary uses be adequately described?

- What about differences among developed/developing world, U.S. vs. Europe, etc.?

Burdens on researchers

- Preparing data & materials to share takes time

- Unfair burden on researchers with limited resources

- Inconsistent institutional infrastructure & support

- Who bears cost of curation?

Risks to researchers

- Won’t other researchers ‘scoop’ me?

- Errors will become more readily known and possibly subject to criticism

- Early adopters will bear the brunt

- Openness not yet a widespread criterion for promotion

- Is ‘openness’ more important than other evaluative criteria?

Burdens on reviewers, editors, & publishers

- Must shared analysis code be reviewed alongside manuscript?

- Must publishers provide infrastructure for sharing?

Restrictions on scientific innovation & impact

- Mandating preregistration may limit exploratory analyses, constrain ‘discovery’ science

- Do multiple uses of the same data increase the risk of false positive findings?

Ensuring equity & embracing diversity

- Does emphasizing openness devalue diverse modes of scholarship?

- How do we avoid disadvantaging scholars from institutions outside Europe and North America?

- How do we ensure equitable access to data, materials, & expertise?

Addressing the issues

Protecting participants

| Issue | Response |
|---|---|
| Can confidentiality of shared data be protected? | Yes! |
| | e.g., Databrary successfully stores identifiable video + other data |
| | Identifiable data sharing requires participant permission |
| | Repositories ‘safer’ than individual websites, institutional archives |
| | Store in repository with restricted access |
| | e.g., ICPSR, largest and oldest data repository in social sciences |
| Unforeseeable risks of ‘indefinite’ storage? | Seek permission to share using standard templates |
| | Data reuse maximizes benefits of participation |
| Can data be truly ‘de-identified’? | Consider restricted data sharing even for ‘de-identified’ data |
| Do participants understand sharing risks? | Perhaps not fully, but we can minimize risks |
| | Risks must be balanced with benefits (Brakewood & Poldrack, 2013) |
| | Many fields have long & successful histories of sharing data |
| | Virtues of standard templates for seeking permission |
| How do I talk to participants about sharing? | Databrary has sample scripts and video examples |

Supporting researchers

| Issue | Response |
|---|---|
| Preparing data & materials to share takes time | Prepare to share from the beginning of a study |
| Inconsistent institutional infrastructure & support | Researchers & SRCD can advocate for more support, provide infrastructure & training |
| Unfair burden on researchers with limited resources | SRCD policy recommends sharing, but does not require it |
| | SRCD training & technical assistance |
| | Use and support ‘free’ data repositories (Databrary, ICPSR, OSF) |
| Who bears cost of curation? | Repositories can help |
| | Need stronger support from funders |
| | Reproducible workflows and tools (RMarkdown; Jupyter notebooks) make curation easier |
| | NICHD grants for curation |

Upholding ethical commitments

| Issue | Response |
|---|---|
| Must minors be reconsented when they reach adulthood? | Open question; NICHD says not if overly burdensome + low risk; but different in EU |
| Can future secondary uses be adequately described? | Better practice is to specify future uses in generic terms |
| Differences among developed/developing world, U.S. vs. Europe, etc. | SRCD should work with others toward common standards; Databrary consent one starting point |

Protecting researchers

| Issue | Response |
|---|---|
| Other researchers will ‘scoop’ me | Share when ready (publication goes to press or end of grant period) |
| | Doesn’t seem to happen often in other fields that share data more often |
| | Require citation of data & materials |
| Errors will become more readily known and possibly subject to criticism | Criticizing the work vs. criticizing the worker |
| Early adopters will face the brunt | SRCD recommends, but does not require, materials, data, code sharing |

“SRCD espouses practices that minimize potential harm to contributing participants, researchers, and the public. The value of minimizing harm takes priority over the values of increased scientific transparency and openness.”

“SRCD further acknowledges the need to protect researchers from professional harm that can occur when requests for scientific transparency and openness veer into attacks on the integrity of researchers themselves or result in significant, new, or unfunded burdens that limit progress in scholarship.”

Supporting reviewers, editors, & publishers

| Issue | Response |
|---|---|
| Must shared analysis code be reviewed alongside manuscript? | Review of materials, data, code optional |
| Must publishers provide infrastructure for sharing? | Possible partnerships with data repositories |

Enhancing scientific impact and accelerating discovery

| Issue | Response |
|---|---|
| Mandating preregistration may limit exploratory analyses | Sharing, preregistration, replication recommended and publicized but not required |
| Do multiple uses of the same data increase the risk of false positive findings? | Different analysts may approach same question from different perspectives |
| | Preregistration can help |
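A rough way to see why repeated use of the same data raises the false positive concern: the sketch below assumes \(k\) independent tests, each run at significance level \(\alpha\), with every null hypothesis true. The symbols \(k\) and \(\alpha\) and the independence assumption are illustrative and do not come from the SRCD materials; analyses of a single dataset are usually correlated, so the result is a guide, not an exact figure.

\[
P(\text{at least one false positive}) = 1 - (1 - \alpha)^{k}, \qquad \alpha = 0.05,\ k = 10:\quad 1 - 0.95^{10} \approx 0.40
\]

Under these assumptions, ten unplanned tests give roughly a 40% chance of at least one spurious finding, which is one reason to declare confirmatory analyses in advance.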

| Issue | Response |
|---|---|
| Does emphasizing openness devalue diverse modes of scholarship? | SRCD embraces diversity in scholarship |
| | One size does not fit all |

Embracing an open future

This is a conversation

- …not just for this hour

- SRCD guidelines recommend but do not require sharing, preregistration, etc.

- Gathering data for future discussion

TOP guidelines

- Citation standards

- Data, analytic methods (code), and research materials transparency

- Preregistration of studies

- Preregistration of analysis plans

- Replication

SRCD’s guidelines for CD authors

- Citation standards

- Research materials transparency

- Design and analysis transparency

- Data and analytic methods (code) transparency

- Preregistration of studies and analysis plans

- Replication

Citation Standards

“SRCD values diverse forms of research, including those carried out on primary data collected by researchers themselves and on secondary data from data repositories, public or non-public sources (e.g., non-author collaborators), and private or proprietary data.”

“Authors should appropriately cite all data sources, program code, materials, and methods used in research.”

Research Materials Transparency

“Sharing materials used in research in child development, including questionnaires, stimuli, coding systems, and so forth, is vital to maximize the reproducibility of findings, improve scientific rigor, and develop new knowledge.”

“…sharing the materials used in research is central to promoting the extension and replication of research results.”

“Sharing can also help to promote greater equity by making materials available to researchers who have fewer resources. Thus, SRCD strongly encourages the sharing of research materials with other researchers to the fullest extent possible.”

“1. Information about whether or not authors agree to make research materials available is not considered as part of the review process but will be collected post-acceptance, prior to publication.”

“2. If an author agrees to make research materials available, the author should specify where that material will be made available and for what time period. Ideally materials that are not copyrighted should be placed in free, open repositories (e.g., Databrary, Dataverse, Dryad, ICPSR, OpenNeuro, OSF) and given persistent identifiers (e.g., DOI’s) to facilitate their citation and use indefinitely. For materials that are copyrighted, a link to the specific measure should be provided.”

“3. Authors agreeing to share research materials should, in the acknowledgments or the first footnote of the published paper, indicate that they will make their research materials available to other researchers and provide information about where the materials may be accessed.”

“4. SRCD would like to learn more about the barriers to transparency. Therefore, if research materials are not shared, authors will be asked to provide information during the process of preparing an accepted manuscript for publication about the reasons why research materials are not being shared. That information will be used to evaluate and improve SRCD’s policies, practices, and services, and will not be included as part of the final publication.”

Design and Analysis Transparency

“Transparency in the design and analysis of studies is vital even though changes in the context and timing of studies can complicate issues of reproducibility in child development research.”

“Authors should fully document participant characteristics, how participants were identified, recruited, and screened; by what criteria they were included or excluded; how and when participants were tested, measured, or observed;…”

“…what apparatus, equipment, or instruments were employed; how human coders or observers (if any) were trained; and how reliability was estimated; among other facets of research.”

“In addition to traditional text- and image-based procedural documentation, video recordings of empirical procedures may improve the transparency of some forms of child development research.”

Data and Analytic Methods (Code) Transparency

“Widespread sharing of data associated with research in child development accelerates the pace of discovery and improves scientific rigor.”

“In some cases, shared data are essential to verify or confirm the specific findings reported in a publication (to demonstrate reproducibility) and can be built upon by other researchers who aggregate findings across the scientific literature (to validate a finding through replication).”

“SRCD strongly encourages members and authors in the society’s journals to share data openly with other researchers, without restrictions or conditions, whenever doing so poses minimal risks to participants and does not violate contractual obligations (e.g., copyright).”

“…it is important to specify how raw data were prepared for statistical analyses; what transformations measures or observations underwent (including scale construction, aggregation levels, outliers); and the steps involved in data analysis…”

“…The use of scripts or analysis code makes documenting these sorts of data analytic processes more transparent and reproducible. SRCD strongly encourages their use.”
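A minimal sketch of what a scripted, self-documenting data preparation step might look like. Everything here is hypothetical and for illustration only: the records in `RAW`, the 1 to 5 response scale, the exclusion rule, and the function name `prepare` are assumptions, not part of SRCD's guidelines or any particular study.

```python
from statistics import mean

# Hypothetical raw records: participant id plus three questionnaire items
# answered on an assumed 1-5 scale.
RAW = [
    {"id": "p01", "items": [3, 4, 5]},
    {"id": "p02", "items": [2, 2, 5]},
    {"id": "p03", "items": [1, 9, 3]},  # 9 is outside the valid range
]

VALID_MIN, VALID_MAX = 1, 5


def prepare(records):
    """Apply each preparation step in order and log what was done."""
    log = []

    # Step 1: exclude participants with any out-of-range item response.
    in_range = [
        r for r in records
        if all(VALID_MIN <= x <= VALID_MAX for x in r["items"])
    ]
    excluded = [r["id"] for r in records if r not in in_range]
    log.append(f"excluded for out-of-range items: {excluded}")

    # Step 2: scale construction, here the mean of the item responses.
    kept = [{"id": r["id"], "score": mean(r["items"])} for r in in_range]
    log.append(f"scale score = mean of items for {len(kept)} participants")

    return kept, log


if __name__ == "__main__":
    prepared, steps = prepare(RAW)
    for step in steps:
        print(step)
    print(prepared)
```

Because every exclusion and transformation is written as code and logged, a reader can rerun the script on the shared raw data and see exactly how the analyzed values were produced.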

“SRCD recognizes that achieving open sharing of diverse types of data will require flexibility based on a variety of considerations including the forms of shared data, participant consent, investigator resources, skill development, institutional barriers or facilitators, and so forth.”

“1. Information about whether or not authors agree to make data available and/or make their analytic methods available is not considered as part of the review process but will be collected post-acceptance, prior to publication.”

“2. If an author agrees to make data and/or analytic methods available, the author should specify where that material will be available. The use of free web-based data and materials repository services (e.g., Databrary, Dataverse, Dryad, ICPSR, OpenNeuro, OSF) is strongly encouraged. Analytic methods may be shared in a data repository or in a code repository like GitHub, BitBucket, or SourceForge.”

“3. If data and/or analytic methods are not shared, authors will be asked to provide information during the process of preparing an accepted manuscript for publication about the reasons why data and/or analytic methods are not being shared. That information will be used for internal SRCD purposes, and will not be included in the final publication.”

“4. Authors agreeing to share data and/or analytic methods (code) should, in the acknowledgments or the first footnote of the published paper, indicate that they will make these materials available to other researchers and provide information about where the materials may be accessed.”

Preregistration of Studies and Analysis Plans

“The preregistration of analysis plans or entire studies can be a powerful way to improve the rigor of certain forms of child development research, especially hypothesis-driven experimental studies.”

“SRCD encourages researchers to preregister analysis plans wherever they are appropriate for their specific questions.”

“However, support for preregistration should not be viewed as diminishing the value or importance of thoroughly documented exploratory investigations or descriptive research.”

“Preregistration of studies involves registering the study design, variables, and treatment conditions. Including an analysis plan involves specification of sequence of analyses or the statistical model that will be reported. Authors choosing to preregister should indicate which independent, institutional registry was used (e.g., http://clinicaltrials.gov/, http://socialscienceregistry.org/, http://openscienceframework.org/, http://egap.org/design-registration/, http://ridie.3ieimpact.org/).”

“1. Authors should, in acknowledgments or the first footnote, indicate if they did or did not preregister the research with or without an analysis plan in an independent, institutional registry.”

“2. Information about whether an analysis plan was or was not preregistered will be included in the process of review.”

“3. If an author did preregister the research with an analysis plan, the author must: a. confirm in the text that the study was registered prior to conducting the research with links to the time-stamped preregistration(s) at the institutional registry, and that the preregistration adheres to the disclosure requirements of the institutional registry or those required for the preregistered badge with analysis plans maintained by the Center for Open Science.”

“b. report all preregistered analyses in the text, or, if there were changes in the analysis plan following preregistration, those changes must be disclosed with explanation for the changes. c. clearly distinguish in text analyses that were preregistered from those that were not, such as having separate sections in the results for confirmatory and exploratory analyses.”

Replication

“SRCD recognizes the value of conceptually grounded, well-motivated replications of important findings from empirical studies, particularly of research published in Child Development. Accordingly, the society encourages their submission.”

“Changes in the context and timing of studies (e.g. changes in sample characteristics, time of data-collection, etc.) or differences among study populations can complicate questions about the reproducibility and replicability of some forms of child development research…”

“Nevertheless, replication is a standard by which the robustness and validity of certain forms of child development research, especially empirical studies, can be evaluated.”

Continuing the conversation

SRCD policies

https://www.srcd.org/about-us/policy-scientific-integrity-transparency-and-openness

http://srcd.org/author-guidelines-Scientific-Integrity-Openness-ChildDevelopment

Also new Conflict of Interest (COI) and text recycling/plagiarism policies for CD.

How can SRCD support members?

Open Science at SRCD 2019

- Big Ideas to Tackle Small Samples, Thu, March 21, 9:30 to 11:00am, Hilton Baltimore, Level 2, Key 1

- Step by Step: Improving best practices for research with children, Fri, March 22, 1:00 to 2:30pm, Hilton Baltimore, Level 2, Key 4.

- Making developmental science more open: Successes, obstacles, and solutions, Saturday, March 23, 2:30-4:00 pm, Baltimore Convention Center, Room 309

- ManyBabies: Bringing large-scale collaborative projects to infant development research, Saturday, March 23, 12:45-2:15 pm, Hilton Baltimore, Level 2, Key 4

Further reading

Brakewood, B., & Poldrack, R. A. (2013). The ethics of secondary data analysis: Considering the application of Belmont principles to the sharing of neuroimaging data. NeuroImage, 82, 671–676. Retrieved from http://dx.doi.org/10.1016/j.neuroimage.2013.02.040

Gilmore, R. O. (2016). From big data to deep insight in developmental science. Wiley Interdisciplinary Reviews: Cognitive Science, 7(2), 112–126. Retrieved September 28, 2016, from http://onlinelibrary.wiley.com/doi/10.1002/wcs.1379/full

Gilmore, R. O., Kennedy, J. L., & Adolph, K. E. (2018). Practical solutions for sharing data and materials from psychological research. Advances in Methods and Practices in Psychological Science, 1(1), 121–130. SAGE Publications Inc. Retrieved from https://doi.org/10.1177/2515245917746500

Gilmore, R. O., & Adolph, K. E. (2017). Video can make behavioural science more reproducible. Nature Human Behaviour, 1. Retrieved from https://www.nature.com/articles/s41562-017-0128

Goodman, S. N., Fanelli, D., & Ioannidis, J. P. A. (2016). What does research reproducibility mean? Science Translational Medicine, 8(341), 341ps12–341ps12. Retrieved October 9, 2016, from http://stm.sciencemag.org/content/8/341/341ps12

Mischel, W. (2011). Becoming a cumulative science. APS Observer, 22(1). Retrieved from https://www.psychologicalscience.org/observer/becoming-a-cumulative-science

Munafò, M. R., Nosek, B. A., Bishop, D. V. M., Button, K. S., Chambers, C. D., Percie du Sert, N., Simonsohn, U., et al. (2017). A manifesto for reproducible science. Nature Human Behaviour, 1, 0021. Retrieved January 10, 2017, from http://www.nature.com/articles/s41562-016-0021

Peng, R. (2016). A simple explanation for the replication crisis in science · Simply Statistics. Retrieved March 12, 2019, from https://simplystatistics.org/2016/08/24/replication-crisis/

This talk was produced on 2019-03-22 in RStudio version 1.1.453 using R Markdown and the reveal.JS framework. The code and materials used to generate the slides may be found at https://github.com/gilmore-lab/2019-03-22-SRCD-conversation/. Information about the R Session that produced the code is as follows:

sessionInfo()

## R version 3.5.2 (2018-12-20)

## Platform: x86_64-apple-darwin15.6.0 (64-bit)

## Running under: macOS Mojave 10.14.3

##

## Matrix products: default

## BLAS: /Library/Frameworks/R.framework/Versions/3.5/Resources/lib/libRblas.0.dylib

## LAPACK: /Library/Frameworks/R.framework/Versions/3.5/Resources/lib/libRlapack.dylib

##

## locale:

## [1] en_US.UTF-8/en_US.UTF-8/en_US.UTF-8/C/en_US.UTF-8/en_US.UTF-8

##

## attached base packages:

## [1] stats graphics grDevices utils datasets methods base

##

## other attached packages:

## [1] qrcode_0.1.1

##

## loaded via a namespace (and not attached):

## [1] Rcpp_1.0.0 revealjs_0.9 digest_0.6.18

## [4] R.methodsS3_1.7.1 magrittr_1.5 evaluate_0.13

## [7] stringi_1.3.1 R.oo_1.22.0 R.utils_2.8.0

## [10] rmarkdown_1.11 tools_3.5.2 stringr_1.4.0

## [13] xfun_0.4 yaml_2.2.0 compiler_3.5.2

## [16] htmltools_0.3.6 knitr_1.21
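For readers who work in Python rather than R, a comparable environment record can be sketched with the standard library alone. This is an illustrative analogue of `sessionInfo()` and was not part of the original talk; the function name `session_info` and the choice of fields are assumptions.

```python
import platform
from importlib import metadata


def session_info(packages=()):
    """Collect interpreter, platform, and package versions for a report."""
    info = {
        "python": platform.python_version(),
        "implementation": platform.python_implementation(),
        "platform": platform.platform(),
        "packages": {},
    }
    for name in packages:
        try:
            info["packages"][name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            # Record the absence instead of failing, so the report is complete.
            info["packages"][name] = "not installed"
    return info


if __name__ == "__main__":
    for key, value in session_info(["pip"]).items():
        print(f"{key}: {value}")
```

Printing this record at the end of a notebook or script gives later readers the versions needed to rebuild the environment.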