An -ome of our own: Toward a more reproducible, robust, and insightful science of human behavior

Rick O. Gilmore

Support: NSF BCS-1147440, NSF BCS-1238599, NICHD U01-HD-076595

2017-01-31 15:58:00


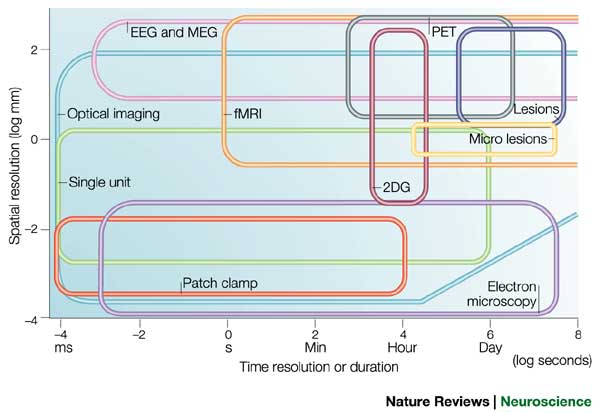

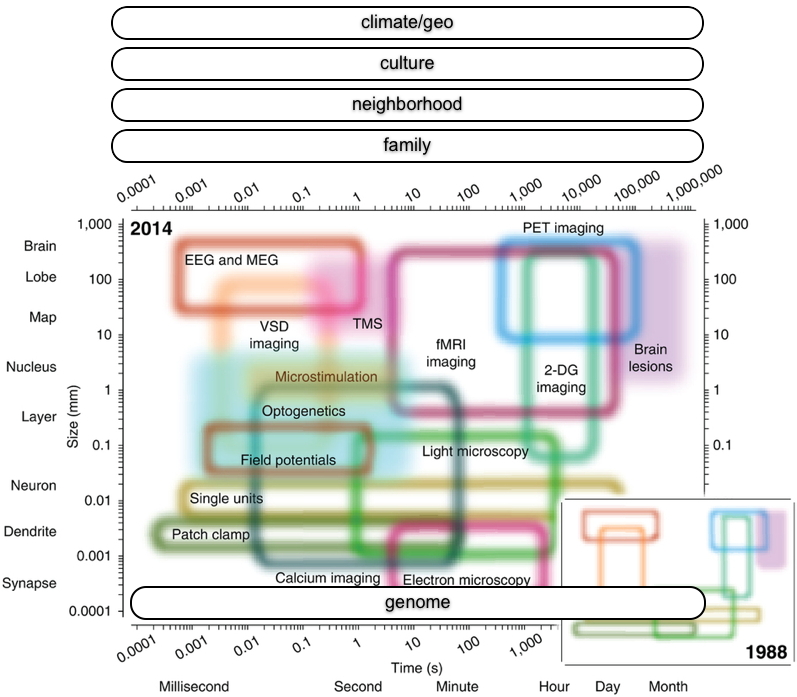

"We have empirically assessed the distribution of published effect sizes and estimated power by extracting more than 100,000 statistical records from about 10,000 cognitive neuroscience and psychology papers published during the past 5 years. The reported median effect size was d=0.93 (inter-quartile range: 0.64-1.46) for nominally statistically significant results and d=0.24 (0.11-0.42) for non-significant results. Median power to detect small, medium and large effects was 0.12, 0.44 and 0.73, reflecting no improvement through the past half-century. Power was lowest for cognitive neuroscience journals. 14% of papers reported some statistically significant results, although the respective F statistic and degrees of freedom proved that these were non-significant; p value errors positively correlated with journal impact factors. False report probability is likely to exceed 50% for the whole literature. In light of our findings the recently reported low replication success in psychology is realistic and worse performance may be expected for cognitive neuroscience."

Szucs & Ioannidis (2016)
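To make the power figures in the quoted abstract more concrete, here is a minimal sketch in R of how power varies with effect size for a fixed sample size. It is not the method used in the quoted study. It assumes a two-sample, two-sided t-test, Cohen's conventional benchmarks for small, medium, and large effects (d = 0.2, 0.5, 0.8), and a hypothetical sample of 20 participants per group; all three are illustrative assumptions, not values taken from the talk.

```r
# Assumed benchmarks for "small", "medium", and "large" effects (Cohen's d).
effect_sizes <- c(small = 0.2, medium = 0.5, large = 0.8)

# Hypothetical sample size per group, chosen only for illustration.
n_per_group <- 20

# power.t.test() is part of the base 'stats' package. With sd = 1,
# 'delta' is the standardized mean difference (Cohen's d).
power_by_effect <- sapply(effect_sizes, function(d) {
  power.t.test(n = n_per_group, delta = d, sd = 1, sig.level = 0.05)$power
})

print(round(power_by_effect, 2))
```

Changing `n_per_group` shows how quickly power for small effects falls away at the sample sizes typical of many behavioral studies.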

Videos of empirical procedures can and should be viewed as the gold standard of documentation across the behavioral sciences. Indeed, were the use of video for this purpose more widespread, many disagreements about whether empirical replications truly reproduced the original experimental conditions would be moot (Open Science Collaboration, 2015; Gilbert et al., 2016). The power of video to document procedures should also be an attractive solution for scientists in fields that do not commonly collect or analyze video.

Gilmore & Adolph (in press)

Source: http://www.nature.com/articles/s41562-016-0021/tables/1

Shonkoff, J. P., & Phillips, D. A. (Eds.). (2000). From neurons to neighborhoods: The science of early childhood development. National Academies Press.

MeeSearch

Tamis-LeMonda, C. (2013). http://doi.org/10.17910/B7CC74.


'Free' service (email, calendar, search, communications platform) vs.

Help institution, community, society

This talk was produced in RStudio version 1.0.136 on 2017-01-31. The code used to generate the slides can be found at http://github.com/gilmore-lab/soda-2017-01-31/. Information about the R session that produced the slides is as follows:

sessionInfo()

## R version 3.3.2 (2016-10-31)
## Platform: x86_64-apple-darwin13.4.0 (64-bit)
## Running under: OS X El Capitan 10.11.6
##
## locale:
## [1] en_US.UTF-8/en_US.UTF-8/en_US.UTF-8/C/en_US.UTF-8/en_US.UTF-8
##
## attached base packages:
## [1] stats     graphics  grDevices utils     datasets  methods   base
##
## loaded via a namespace (and not attached):
##  [1] backports_1.0.4 magrittr_1.5    rprojroot_1.1   tools_3.3.2
##  [5] htmltools_0.3.5 yaml_2.1.14     Rcpp_0.12.8     stringi_1.1.2
##  [9] rmarkdown_1.3   knitr_1.15.1    stringr_1.1.0   digest_0.6.11
## [13] evaluate_0.10

Baker, Monya. 2016. “1,500 Scientists Lift the Lid on Reproducibility.” Nature News 533 (7604): 452. doi:10.1038/533452a.

Begley, C. Glenn, and Lee M. Ellis. 2012. “Drug Development: Raise Standards for Preclinical Cancer Research.” Nature 483 (7391): 531–33. doi:10.1038/483531a.

Open Science Collaboration. 2015. “Estimating the Reproducibility of Psychological Science.” Science 349 (6251): aac4716. doi:10.1126/science.aac4716.

Gilbert, Daniel T., Gary King, Stephen Pettigrew, and Timothy D. Wilson. 2016. “Comment on ‘Estimating the Reproducibility of Psychological Science’.” Science 351 (6277): 1037–7. doi:10.1126/science.aad7243.

Goodman, Steven N., Daniele Fanelli, and John P. A. Ioannidis. 2016. “What Does Research Reproducibility Mean?” Science Translational Medicine 8 (341): 341ps12. doi:10.1126/scitranslmed.aaf5027.

Henrich, Joseph, Steven J. Heine, and Ara Norenzayan. 2010. “The Weirdest People in the World?” The Behavioral and Brain Sciences 33 (2-3): 61–83; discussion 83–135. doi:10.1017/S0140525X0999152X.

Lash, Timothy L. 2015. “Declining the Transparency and Openness Promotion Guidelines.” Epidemiology 26 (6). LWW: 779–80. http://journals.lww.com/epidem/Fulltext/2015/11000/Declining_the_Transparency_and_Openness_Promotion.1.aspx.

Maxwell, Scott E. 2004. “The Persistence of Underpowered Studies in Psychological Research: Causes, Consequences, and Remedies.” Psychological Methods 9 (2): 147–63. doi:10.1037/1082-989X.9.2.147.

Munafò, Marcus R., Brian A. Nosek, Dorothy V. M. Bishop, Katherine S. Button, Christopher D. Chambers, Nathalie Percie du Sert, Uri Simonsohn, Eric-Jan Wagenmakers, Jennifer J. Ware, and John P. A. Ioannidis. 2017. “A Manifesto for Reproducible Science.” Nature Human Behaviour 1 (January): 0021. doi:10.1038/s41562-016-0021.

Nosek, B. A., G. Alter, G. C. Banks, D. Borsboom, S. D. Bowman, S. J. Breckler, S. Buck, et al. 2015. “Promoting an Open Research Culture.” Science 348 (6242): 1422–5. doi:10.1126/science.aab2374.

Prinz, Florian, Thomas Schlange, and Khusru Asadullah. 2011. “Believe It or Not: How Much Can We Rely on Published Data on Potential Drug Targets?” Nature Reviews Drug Discovery 10 (9): 712. doi:10.1038/nrd3439-c1.

Szucs, Denes, and John P. A. Ioannidis. 2016. “Empirical Assessment of Published Effect Sizes and Power in the Recent Cognitive Neuroscience and Psychology Literature.” BioRxiv, August, 071530. doi:10.1101/071530.